Data science and data management are crucial components of Two Sigma’s business, so staying closely involved with the broader research community has long been a key priority for the company. Two Sigma has, for example, served for years as an active industry member of Carnegie Mellon University’s Parallel Data Lab (PDL), a leader in storage systems research. Two Sigma has shared datasets with PDL researchers, who used them to study the behavior of clusters under different workloads, resulting in publications at EuroSys’18 and USENIX ATC’18, leading conferences in systems research.

We recently sat down with CMU’s Andy Pavlo, an assistant professor of databaseology in the Computer Science Department at CMU to discuss his current research and outlook on the future of database management. A member of the PDL, his main research areas are the design of main-memory systems using modern hardware support, and the development of self-tuning techniques for databases.

Andy received the ACM SIGMOD Jim Gray Doctoral Dissertation Award in 2014 and a Sloan Research Fellowship in 2018. Recently, researchers from Two Sigma Labs have collaborated with Andy on a paper called Sundial: Harmonizing Concurrency Control and Caching in a Distributed OLTP Database Management System, which presents an in-memory distributed optimistic concurrency control protocol and advises future directions for data centers. The paper appears in the Proceedings of VLDB’18, a top research conference in database systems.

Q: An article from your research group recently received the Best Paper Award at SIGMOD’18. Congratulations. Can you please tell us about the contributions of this work? Why was now the right time for the paper to have an impact, and why do you think it was recognized with such a prestigious award?

The paper was entitled “SuRF: Practical Range Query Filtering with Fast Succinct Tries“. The lead CMU PhD student for this project is Huanchen Zhang. This work was with my CMU colleagues David Andersen and Hyeontaek Lim, and in collaboration with TUM’s Viktor Leis, Hewlett Packard Labs’ Kimberly Keeton, and Intel Labs’ Michael Kaminsky.

This research studies the problem of how to speed up range queries with a filter using as little memory as possible. For example, suppose you have a database of IoT sensor events and you want to know whether any event occurred within a specific time window. You could read every record until you found one, but this would be slow if you have a lot of data and your program had to pull everything in from storage. You could build an index on the records’ timestamps (e.g., B+Tree). This would always provide the correct answer without having to scan all the data, but the data structure would be large.

A potentially better approach is to use an approximate filter that sacrifices accuracy for speed and storage overhead. This filter would tell you whether a record you are looking for might exist within the time window. That is, since it is approximate, it may incur false positives (i.e., that an element exists when it does not). Thus, one would still have to check the original data at the identified location to determine whether the record really exists or not. But the nice property of a filter is that it will never give false negatives (i.e., if the filter says no, it is a no).

Bloom filters are the most widely used approximate data structure today. They can determine whether a key exists in a set with a small fraction of storage overhead per key. They are used in many aspects of DBMSs, including for speeding up storage methods and query processing. A Bloom filter seems like it could solve our example use case above because it is small and fast. But the key limitation with Bloom filters is that they only support point queries (i.e., one can only check whether a single key exists). The only way to use them in our example scenario is to know the exact values you want to check for in the filter, which is pointless because if we had that information we would not need the Bloom filter in the first place.

To overcome this problem, we created the Succinct Range Filter (SuRF). SuRF is a fast and compact filter that supports point queries (i.e., exact key matches) like a Bloom filter, but can also give approximate answers to range queries. This means that for a given range specified by a pair of low/keys, SuRF will tell whether a key exists within that range. It can also tell you the number of keys that exists within that range as well. At its core, SuRF is a trie that encodes digits of keys rather than the entire key (as in a B+Tree). The encoding is succinct and efficient. For 64-bit integer keys, for example, SuRF only requires around 12 bits per key to achieve a 1% false positive rate, and its performance is comparable to Bloom filters. Previous work has proposed succinct tries in the past, but SuRF is the first one that is engineered to support fast look-up operations without incurring a higher storage overhead.

There are still many scenarios in which a Bloom filter is preferable to SuRF. But our hope is that SuRF is used in a variety of domains, within both databases and other types of systems. As such, we have released the complete source code for SuRF on Github under the Apache License: https://github.com/efficient/SuRF

Q: What do you expect will be the long-term effects of machine learning (ML) techniques on DBMS design and implementation and vice versa? Recent research has focused on extending DBMSs to include system support for efficient machine learning, and on applying ML for DBMS self-tuning or more efficient index structures. Do you think these directions are promising and will be, in the medium term, available in commercial DBMSs?

The way I like to think about this is that there are two approaches to using ML to improve DBMSs. The first are methods that alter the runtime behavior of the DBMS’s internal components. Examples of such components include the query optimizer’s cost model or index data structures. These are written by the developers that built the DBMS and are typically controlled by rules or heuristics. Thus, instead of relying on these hardcoded rules, new research has explored ways to learn new policies based on the observed workload.

The second category is on methods for automatically tuning the configuration of the system, such as the database’s physical design, knob configuration, and hardware resources. This is something that DBAs traditionally manage, although there is a long research history of tools to aid them with this process. The goal of this line of work is to relieve humans from many of these laborious tuning tasks, so that they can focus on other, more enriching activities.

There is some overlap between these two categories, but the key difference is that the first is about tuning a single component of the system whereas the second is about taking a holistic view of the overall system. As such, they need different types of training data and APIs to interact with the DBMS. This field is still new and to the best of my knowledge no major vendor is shipping a DBMS that uses these types of ML. IBM introduced a feature for DB2 in the early 2000s called the “Learning Optimizer” (LEO), but this did not use techniques that we would now consider to be part of modern ML practices (e.g., deep neural networks). The cloud vendors are using ML-guided tools to manage their fleets, but these optimizations are deployed at the infrastructure-level and are not about tuning individual databases. Oracle announced in 2017 that they have an autonomous / self-driving database-as-a-service offering, but it currently appears that they are just providing their existing DBA tools in a managed environment (see my personal blog for further analysis).

I am enthusiastic about the promise of using ML to improve DBMSs. The early work in this field is mostly about training deep neural networks (DNNs) to replace existing, human-built methods and components. For example, researchers have proposed using DNNs to replace histograms for estimating distribution of a column’s values. Where this work will eventually go is toward how to build systems that use the information collected about databases, workloads, and the system itself to extract patterns that are non-obvious to humans. That means instead of just replacing existing histograms, the system could train a network that can identify weird correlations between tables/columns or incorporate growth trends in its predictions. I envision a future DBMS that uses such “learned” models in conjunction with existing data structures rather than supplanting them entirely. This would allow it to do more than what is possible today with existing techniques.

The challenge will be how to maintain these models in a dynamic environment while ensuring performance stability and avoiding regressions if the models go awry. As such, I think that it will take several years before we see ML-enhanced components shipped in commercial-grade DBMSs.

Q: Data is no longer static, clean, well-structured, and “monolithic,” and neither are DBMSs. What have we learned in the past ten years that will guide DBMSs research and development for the next ten? What is missing?

I disagree with the premise of your question that data is “no longer” static, clean, or well-structured. The truth is that it was never this way, nor will it ever be. This is not a new phenomenon. People that build DBMSs like to think that their system is the center of the universe when it comes to an organization’s data. Academics sometimes go further and like to think that databases are always clean and in the proper, normal form. The real difference is that now there is more data than before, and more organizations have the types of data management problems that you list.

There are two trends that I find interesting in this space that are primarily being driven by cloud computing. The first is that DBMSs should not be limited to only reading data in their internal file formats. There is a plethora of formats now that are widely used in modern applications and services, such Parquet, ORC, and plain JSON files. A DBMS could convert these files into their internal format, but this would duplicate the data. Instead, it should be able to scan this data in place (also known as in situ processing). There is ongoing research by others on how to incrementally scan these files and build partial indexes on to improve query performance.

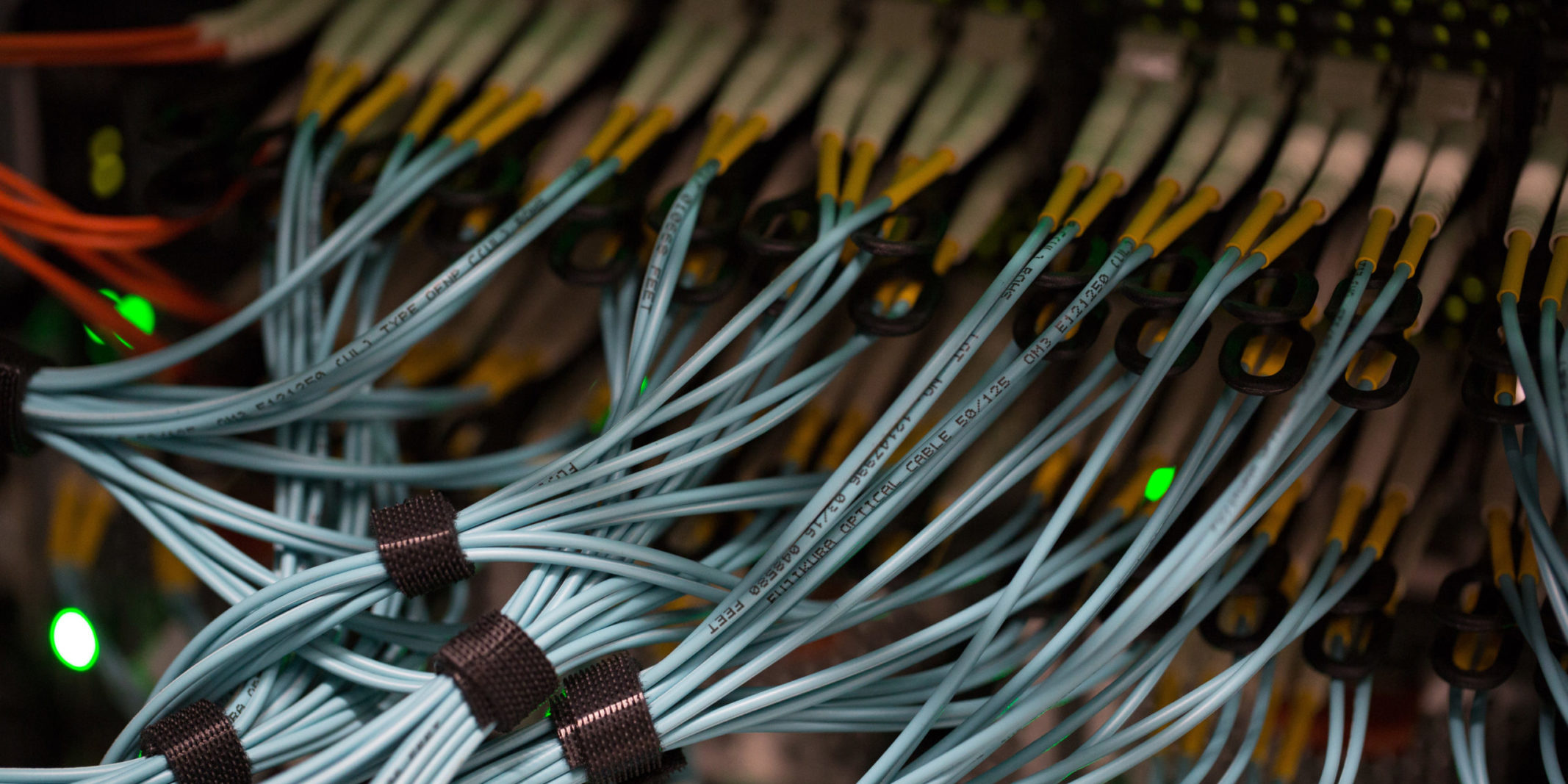

The second trend will be the increased prevalence of shared-disk distributed DBMS. By “shared-disk” I mean a DBMS that uses a distributed storage layer as its primary storage location, such as HDFS or Amazon’s EBS/S3 services. This separates the DBMS’s storage layer from its execution nodes. Contrast this with a shared-nothing DBMS architecture where each execution node maintains its own storage. Shared-disk DBMSs are not new and there are many existing products available today, namely Amazon Redshift, Snowflake, and Oracle Exadata.

These two research areas are linked. An organization with a shared-disk DBMS that can execute queries on files in any possible format allows applications to more easily extrapolate new knowledge from their existing data. Such an operating environment is referred to as the “data lake” or sometimes pejoratively as the “data swamp.”

One interesting question concerns what aspects of a DBMS’s operations should be moved to different parts of the stack. For example, Amazon Aurora moves some logic about transactions into its EBS storage layer. Oracle’s Exadata pushes predicates to the storage layer. In some way this blurs the line between shared-disk and shared-nothing architectures and it opens new opportunities for “serverless” DBMS architectures. This is what I foresee as an interesting research direction for the next decade.